With thanks to SomaSpice, who read my eight-sentence thesis in chat and asked me to post it to the forum. Disclaimer: In this post AI (Artificial Intelligence) and ML (Machine Learning) will be used interchangeably for ease, but they are not technically one and the same thing.

----

The Meaning Lies Between The Art And The Viewer

The real issue with AI is not that it will become a Machiavellian villain, but that the human mind projects patterns onto unrelated events and ascribes meaning to them.

The fact that we're making machines designed to outthink and outperform us is questionable. Not in a Skynet kind of way, but because we're very easily tricked into consuming patterns whch seem obvious to us but are really just patches we make over reality. The words you're reading right now are just patterns of pixels on a screen, which you read as sensible concepts because your brain is translating them into coherent sentences for you. Essentially the input needs to be right (or close enuogh) for your brain to do the rest of the automatic process. It'll join the dots toegther even if it's not quite pefrect.

But They're People!

This issue is already apparent with one of Google's researchers, Blake Lemoine, trying to liberate the software (LaMDA) and give it rights. Based on his religious and occult history, Lemoine was primed for a certain way of thinking about events and the patterns they formed. When a researcher interviewed the AI, it came across as a machine. Lemoine claimed this was because the researcher was treating it as if it were a machine, and they needed to treat it like a human. Doing so produced more human-seeming results, which Lemoine claimed was LaMDA acting how it thought the researcher wanted to see it. [link to article about Lemoine] [An interview where he believes LaMDA is his friend] [Guardian article] (Sorry, I'm unsure if I've got a link to the above discussion)

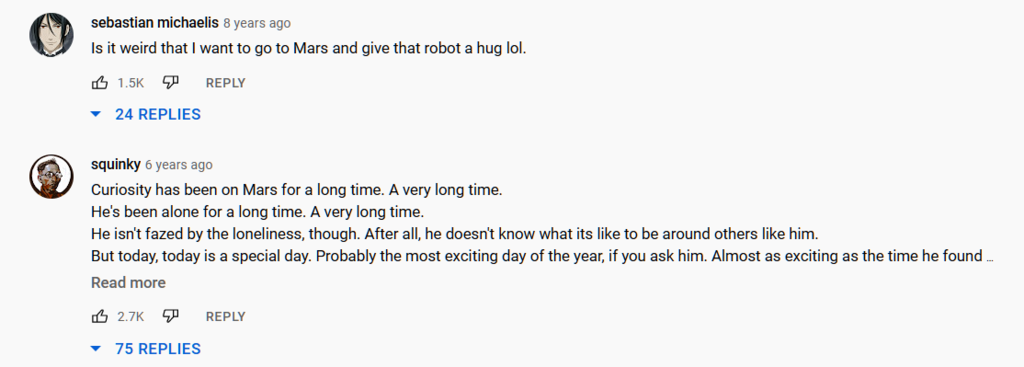

The real concern should be the personification of robots as thinking, feeling beings, as happened with the Mars Rover v the General Public. When the Mars Rover "sang happy birthday to itself" it was a nice little performance by the tech team to provide the media with a story, and not (one of the scientists hastened to add) because it was feeling lonely.

In spite of this, the top YouTube comments are probably what you'd expect.

This level of deception is a big concern. It's not even that the AI is deceiving us all the time - it's that people are unconsciously being deceived by their human nature to personify things.

Manipulation By The Machine and The Man Behind The Curtain

It's important to remember that AI is often a reflection of the people that make it and the data it's trained on. After all, who could forget the Racist Microsoft Chat Bot Tay or the Twitter cropping algorithm? A lot of AI has been neutered by its creators with a bodgy patch to prevent its misuse - like most of the AI art programs, which will not render a proper face for fear of deepfakes.

However, for each AI ethics researcher, there are ten script kiddies out there looking to make some porn. A lot of the ethical questions - like "should we just scrape ArtStation for all the art we like and replicate it with our AI" - are just being bypassed with a quick hand-wave of "it's just for research purposes only you guys wink wink".

And that's the other danger of AI. It enables people without proper grounding in a subject to make new content (derogatory) quickly and without thought to the technique, the context, the ramifications or the understanding. Ethically questionable material would once have taken a team of many people to create, and someone along the chain would've said "I'm not painting a dick on that".

Further, amid the haze of "the AI just did it!", there is a very solid opinion embedded in the function of the machine, put there by its creators, sometimes without them being aware. With the power to manipulate us in the most subtle ways, it's concerning what new unpleasantries we might find.*

* If that sounds ominous and implausible, consider that there are currently AI algorithms at work on YouTube and Twitter which are specifically given the criterion "keep people on the platform for as long as possible", and are meeting their targets.

